Many twentysomethings want to spend their nights at a bar. Ivan Zhang wanted to spend his at Google.

It was 2018, and his friend Aidan Gomez was working for Google Brain, a research team dedicated to making machines intelligent so they could improve people’s lives. Zhang had dropped out of the University of Toronto’s computer science program to work for a friend’s genomics company, but he still loved research, and the thought of publishing a paper as a dropout appealed to him. “That spirit of learning never left,” he says.

After work, Zhang would often drop by Google Brain’s Toronto offices to hang out with Gomez. They’d study deep learning in the lab until about 1 a.m. They would feed computers large amounts of data to see if the machines could identify patterns and make predictions about what would come next. Zhang was around so often that one evening, Google engineering fellow Geoffrey Hinton—an AI superstar who’s often called the “godfather of deep learning”—ran into him. “The first thing he said to me was, ‘Hey, where’s your security badge?’”

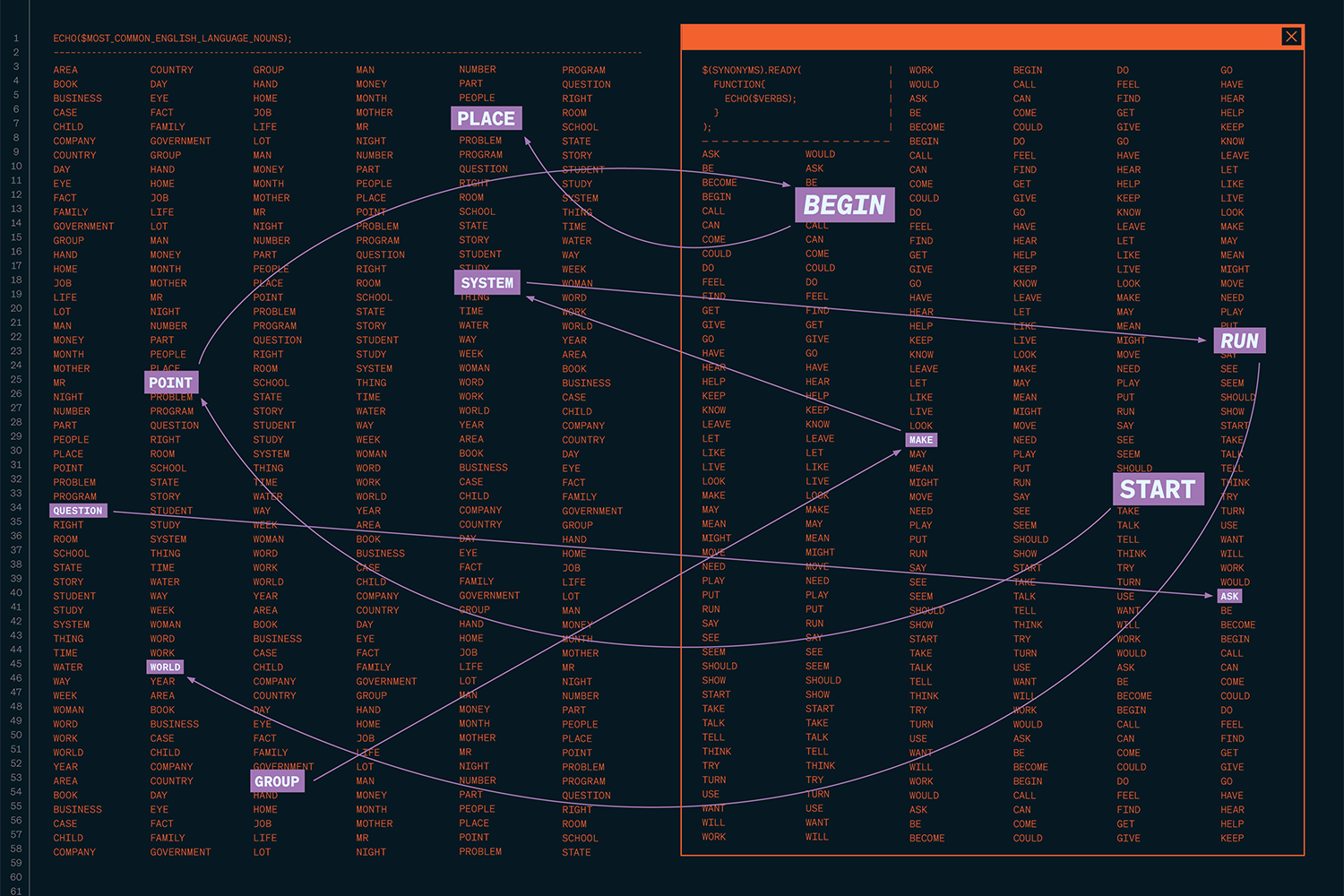

Gomez was particularly interested in one aspect of deep learning: natural language processing, a branch of artificial intelligence that teaches computers to respond to speech and text like humans. In 2017, he had co-authored a paper that posited that a transformer, a deep-learning model that can read large amounts of data at once, might do a better job understanding language than the technology used up until that point.

The concept was revolutionary. Because the transformer model looked at vast amounts of data at once, it could understand the full relationship between words in a sentence or paragraph. That meant it was less likely to misinterpret queries, and more likely to return text or speech that was easy to understand—just like a human voice.

By 2019, Zhang had a job at a machine-learning company, but he was itching to put into practice some of the experience he’d gained on the job and through the late-night research he’d done with Gomez. “That’s when I pitched the idea: We should start something,” says Zhang. “I didn’t know what, but I knew I didn’t know many people who had the same work ethic as me or Aidan.” Gomez agreed and told Zhang to start sending him ideas, even if they were trash, so they could come up with a viable business.

In the end, it all came back to transformers. A talk Gomez gave on AI in 2019 piqued the interest of members of the Toronto venture-capital firm Radical Ventures. They asked him if he had any cool start-up ideas. Gomez said yes: He could take the transformer architecture he wrote about a few years earlier and apply it to the entirety of the internet to teach machines how to speak like humans. The more information the transformer had to train on, the better it could do a wide range of tasks, like classifying data, generating content and even detecting toxic comments on social media.

Radical Ventures loved the idea and immediately wrote a seed cheque. In 2019, Gomez and Zhang launched their company, Cohere. Zhang quit his job and started building systems to grab data off the internet so he and Gomez could feed it to their models. By January of 2020, they were joined by another partner, Nick Frosst, and three additional engineers.

The company raised US$125 million in Series B funding earlier this year. It’s among the businesses leading research and development in natural language processing, a technology that is powering chatbots and moderating content, learning how to talk and text like people by absorbing all of the best and worst the human brain has to offer. It’s a technology already in the process of changing your life, even if you don’t know it yet.

Alan Turing, the famed British mathematician, proposed the notion that machines could think in 1950, when he published his seminal paper “Computing Machinery and Intelligence.” By 1952, computing pioneer Christopher Strachey had programmed a computer to play checkers. Advancements kept clipping along, and many AI scientists assumed that by 2000, computers would be sentient, talking machines, à la 2001: A Space Odyssey.

But to do all the amazing things scientists dreamed of, computers needed to comprehend and interpret human language. Herein lies the challenge, explains Ewan Dunbar, a professor of computational linguistics at the University of Toronto. “Language and speech are extraordinarily complicated,” he says.

Humans themselves still don’t fully understand how they give meaning to language, much less how to make computers fully understand it. Consider a simple sentence like “a dog chases a ball.” Do we imagine the dog performing the action? Does the word “chase” evoke different meanings depending on your lived experience? This is what we call “semantics”—the study and classification of changes in the signification of words. You can throw all the data you want at computers, but it’s difficult for them to understand the tone, context and meaning that most humans start to learn at birth. That’s why, so far, no machine has made it through the Turing Test, a tool devised by Turing that probes whether a computer can pass for a human.

Which isn’t to say there haven’t been significant leaps and bounds in AI and natural language processing since the 1960s. Anytime you’ve asked Siri to schedule a meeting on your iCalendar or told your Google Assistant to set the air conditioning to 20 degrees, you’ve been talking to artificial intelligence systems. But they aren’t sentient—they can’t think and feel for themselves. Siri knows how to answer questions and perform tasks because it’s an AI system that’s been trained on millions of pieces of data that help it better understand what you’re saying. However, it doesn’t get the complexity of language in the way that another human would. If you replied to Siri with sarcasm, it would not understand that you were being snarky because it doesn’t register that the change in tone changes the meaning of what you are saying.

In 2011, computer scientist Andrew Ng founded Google’s Deep Learning Project, which would eventually become Google Brain. His research focused on how to make machines process data the same way the human brain does, and he accomplished this by building neural networks that work similarly to our brains. Not only can they process a great deal of information, but they can react to it and remember it too.

For years, most natural language processing systems were built on recurrent neural networks. These systems looked at data sequentially, one word at a time, and retained memories of the meanings they learned. The technology was impressive, powering digital assistants like Alexa, but it was also imperfect. If you were to ask a virtual assistant “Why isn’t my printer connected to my computer?” it would read the word “printer” first without connecting it to the word “computer.” Common sense dictates we’re talking about a home printer. But virtual assistants don’t have any common sense. When an assistant reads the word “printer,” it may track through many other meanings of the word. It won’t automatically know how to tell the difference between your home printer and a person who prints newspapers or a 16th-century printing press. “In order to be more like us, computers have to absorb all that stuff,” says Geoffrey Hinton.

Gomez was among a group of researchers at Google Brain who started looking at ways for AI to read much larger pieces of data so it could begin to understand the nuances of language. They landed on transformers, which analyze all the components in a body of text at the same time rather than one by one, as in previous models. Transformers use an AI mechanism called “attention,” which asks the model to determine which words are the most important and most likely to be connected.

A virtual assistant using a transformer will be better able to answer questions and follow commands. So if you ask “Why isn’t my printer connected to the computer?” it will connect the word “printer” to the word “computer,” eliminating other definitions of the term. It will not only know that you mean your home printer but also be able to respond in a way that is easy for you to understand: It might tell you in plain English that you need to connect the printer to wifi. The use of transformers will make computers much easier for people to use: We’ll be able to ask computers questions the same way we ask Google questions.
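For readers who code, the attention idea can be sketched in a few lines of Python. This is a toy illustration, not Cohere’s or Google’s implementation: the two-number word vectors are invented by hand for this example, whereas a real transformer learns its vectors (and separate “query” and “key” versions of them) from data. The sketch scores how strongly the word “printer” relates to every word in the question, then turns those scores into weights that sum to 1.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over all keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)

# Hypothetical hand-made vectors; a trained model would learn these.
tokens = ["why", "printer", "connected", "computer"]
vectors = {
    "why": [0.1, 0.0],
    "printer": [1.0, 0.9],
    "connected": [0.4, 0.3],
    "computer": [0.9, 1.0],
}

keys = [vectors[t] for t in tokens]
weights = attention_weights(vectors["printer"], keys)

for token, weight in zip(tokens, weights):
    print(f"{token:>10}: {weight:.2f}")
```

With these made-up vectors, “printer” puts more weight on “computer” than on “why” or “connected,” which is the kind of link that lets the model rule out printing presses and newspaper printers. All the words are scored in one pass rather than one after another.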

Cohere, at first glance, has much in common with many other tech start-ups: three brilliant young men, one of them a dropout like Mark Zuckerberg, channelling their lifelong obsession with programming into a business.

Ivan Zhang came to computers in high school, when a friend pushed him into taking a computer science class. He was enchanted by how you could write simple code and get a machine to do something for you. The first program he ever wrote was a chess AI—even though he didn’t actually know how to play chess.

Nick Frosst’s origin story begins at Snakes & Lattes, a board game café in downtown Toronto. He worked there throughout his undergrad studies, and one day he got into a conversation with a customer about the computability of a game he was playing. The customer turned out to be a University of Toronto computer science student, and he connected Frosst with John Tsotsos, a former U of T associate chair of computer science, who in turn hired Frosst as an undergraduate research assistant.

When he’s not working on making tech talk, Frosst is in an indie band called Good Kid, and he occasionally hosts sea shanty singalongs in an east-end Toronto bar. Gomez is still in school, juggling his work at Cohere with a doctoral program in the philosophy of computer science at Oxford University. Zhang, meanwhile, recently got engaged. “We do our best work when we have lives that are full,” says Frosst.

All three are unfussy about the task at hand. They’re working on a fundamental problem: how to provide easy-to-use and affordable NLP products for a whole host of companies, big and small. Their tools are relatively straightforward. “If you know how to code, that should be all you need,” says Zhang.

To work effectively, transformers need to read tons of data so they can learn how to respond to people and perform commands. Google uses a transformer named BERT to support its search engine. BERT was fed all of Wikipedia, thousands of books and scads more data from the internet. Transformers perform large matrix multiplications on huge amounts of data, which takes a ton of computing power and technical know-how. It’s not viable for small- to medium-sized businesses to do this themselves if they want to create a chatbot or sort information. It’s also prohibitively expensive.

Cohere’s transformer is also trained on a massive amount of information—billions of web pages from across the internet and discussion boards like Reddit. “We train transformers on terabytes of text, which is just a crazy amount—more text than any person has come anywhere close to reading ever in their entire lives,” says Frosst.

For the first few months of Cohere, the founders debated what exactly their business offering to clients should be. As they worked on training the transformer, the trio realized that what made this technology so powerful was that it could solve so many different problems. So they decided to create a platform that could be used as a plug-in by different companies. “If you have a natural language problem, you can come to Cohere, use a transformer and get a solution,” says Frosst.

The company began to offer products that either organized information (like identifying posts that violate community guidelines) or generated information (like writing content). An airline, for example, could use Cohere’s technology to sort customer complaints by category. A social-media network could use the transformer to flag abusive content. An online retailer could generate Instagram captions.

They operated in stealth mode for about a year, developing the technology without announcing they existed, so that they could launch when their business was further along. In 2021, the company emerged publicly, raising US$40 million in Series A funding led by Index Ventures. Sometime near the beginning, they’d asked Hinton to invest, but he declined; he finds it a hassle to invest in early-stage companies. By 2021, observing how much they had grown, he decided to back them. “They were good people, and they were clearly making a success of it,” says Hinton, who notes that the Cohere team was among the first to realize that there was a big demand for natural language interfaces.

Several Canadian start-ups are working on AI and natural language processing. In 2022, five of them, including Cohere, made it onto CB Insights’ annual AI 100 list. Warren Shiau, research vice-president of AI and analytics at IDC Canada, says that funding in Canada tends to go to companies that provide infrastructure for other companies. That’s a big difference from the States, where companies are more likely to be working on disruptive technology with direct consumer uses.

Typically, the Canadian start-ups that get huge influxes of cash are those that are unrestricted by geography. “The applicability can go anywhere, and their backers are coming from everywhere,” Shiau says. Cohere, which is marketing itself as useful for all sorts of applications, can work in any global market.

While many start-ups are focused on pursuing artificial general intelligence—in other words, sentient machines—Cohere is keeping its focus on language. “We don’t need to invent artificial general intelligence to get value out of artificial intelligence,” Zhang says. “We can get value out of natural language processing now.”

As with most inventions, AI’s biggest ethical threats come not from the technology itself but from the humans who created it. AI has long had the unfortunate habit of replicating the biases of its programmers. In the 1980s, St. George’s Hospital Medical School in the U.K. used an algorithm to screen applicants. The hope was that it would eliminate human mistakes. But instead, it amplified them: Students with non-European names had points deducted from their admission scores. This wasn’t a machine run amok—it was the result of the programmer codifying the biases already rooted in the school admissions department.

Transformers receive tons of information, but all of it is created by people, who have prejudices and pre-existing notions, which AI algorithms can (and do) replicate. In 2017, Amazon had to scrap a recruitment AI it was using to filter job candidates because it was biased against women. The technology had been trained on past resumés, most of them submitted by men. So the system taught itself that men were preferred candidates, and it filtered out any resumés that contained the word “women,” as in “women’s chess club captain.”

AI can also fail when its algorithms aren’t fed enough diverse information. Researchers have found, for example, that two benchmark facial-recognition software programs do a poor job at identifying anyone who isn’t Caucasian because their machines were only trained on white faces. A similar thing happened with Tay, a Microsoft chatbot designed to talk like a teen. As soon as trolls found it, it started saying racist and sexist things, largely in response to messages it was receiving. Much of the problem stemmed from Tay’s “repeat after me” feature; clearly, Microsoft had not anticipated how ugly the internet can be.

Now, AI researchers are trying to figure out how to give algorithms fairness rules, which tell the machine that it is not allowed to make a decision based on certain properties, whether they’re words or other signifiers. The University of Toronto’s Ewan Dunbar says that classification—the sorting and labelling of information—is one area that has to be carefully vetted. “The systems are trained by looking at examples of what people have said,” he says, so they can replicate racial and gender bias. For example, two studies released in 2019 revealed that AI used to identify hate speech was in fact amplifying it. Tweets written by Black Americans were one and a half times more likely to be flagged as abusive; that rate rose even higher if they were written using African-American Vernacular English. Just as AI has trouble identifying sarcasm, it also does not always understand social context.

The good news is that researchers have identified a number of ways to test and train machines so they do not replicate bias, largely by ensuring that they are using the right data and constantly monitoring performance. Brian Uzzi, a professor at the Kellogg School of Management, suggests that blind taste tests—denying the algorithm information suspected of biasing the outcome and then seeing if it comes up with the same result—may help programmers identify biases and resolve them.
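A blind taste test of this kind can be sketched in a few lines of Python. Everything here is hypothetical and invented for illustration: the scoring rule, the field names and the two applicants are made up, loosely echoing the admissions example above rather than reproducing any real system. The sketch scores each applicant twice, once with the suspect field and once with it hidden, and reports whose score changes.

```python
def biased_score(applicant):
    """Hypothetical screening rule that wrongly penalizes a name-based field."""
    score = applicant["grades"]
    if applicant.get("name_origin") == "non-european":
        score -= 15
    return score

def fair_score(applicant):
    """Hypothetical rule that looks only at grades."""
    return applicant["grades"]

def hide_fields(applicant, hidden_fields):
    """Return a copy of the record without the fields under suspicion."""
    return {k: v for k, v in applicant.items() if k not in hidden_fields}

def blind_taste_test(score_fn, applicants, hidden_fields):
    """List the ids whose score changes once the suspect fields are hidden."""
    changed = []
    for applicant in applicants:
        seen = score_fn(applicant)
        blind = score_fn(hide_fields(applicant, hidden_fields))
        if seen != blind:
            changed.append(applicant["id"])
    return changed

applicants = [
    {"id": 1, "grades": 80, "name_origin": "european"},
    {"id": 2, "grades": 80, "name_origin": "non-european"},
]

flagged_biased = blind_taste_test(biased_score, applicants, {"name_origin"})
flagged_fair = blind_taste_test(fair_score, applicants, {"name_origin"})
print("biased rule changes scores for:", flagged_biased)
print("fair rule changes scores for:", flagged_fair)
```

A score that moves when the field is hidden suggests the rule was leaning on that field. The check has limits: as the Amazon example shows, a system can pick up the same signal through other wording, so hiding one field does not prove a model is fair.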

Cohere’s terms of service allow the company to end the client relationship if users deploy its tool for the “sharing of divisive generated content in order to turn a community against itself.” Both Frosst and Zhang are passionate about the potential for their technology to actually stop abuse on platforms. “We respect the power of our technology,” says Frosst. “We know that it can be used for a whole bunch of incredible things. And the flip side of that is it can be used for things that we wouldn’t be proud of.”

In February of 2020, Cohere signed a lease for an office in Toronto. Then came the pandemic. By July of 2020, when they could finally return to work in a limited capacity, they realized they had already outgrown their new home. They moved to a larger space that used to be Slack’s Canadian HQ.

At Cohere’s offices today, they’re getting ready to install a staircase so staff can move more easily between floors and get to the cafeteria a little quicker. It’s stocked with healthy snacks: Zhang and Gomez say they will eat anything that’s put in front of them, so they wanted to make sure the options available at work are good for them. (Espresso is plentiful, however, as is beer for after-work socializing.)

The company currently has 72 people working in Canada, 15 in the United States and six in the United Kingdom. The US$125-million Series B funding they secured in February will allow Cohere to expand even further internationally. Former Apple director Bill MacCartney, who is now Cohere’s vice-president of engineering and machine learning, is leading the new Palo Alto office. It’s entirely possible, Zhang admits, that they will soon outgrow their new Toronto headquarters again.